Making space for emotion in our work with AI

On 5 June, I will be speaking at the Royal Ballet and Opera's RBO/SHIFT 2026 festival in London, as part of Friday's SHIFT EXCHANGE: a one-day programme exploring the ideas, tensions and creative breakthroughs shaping the future of artificial intelligence in the arts.

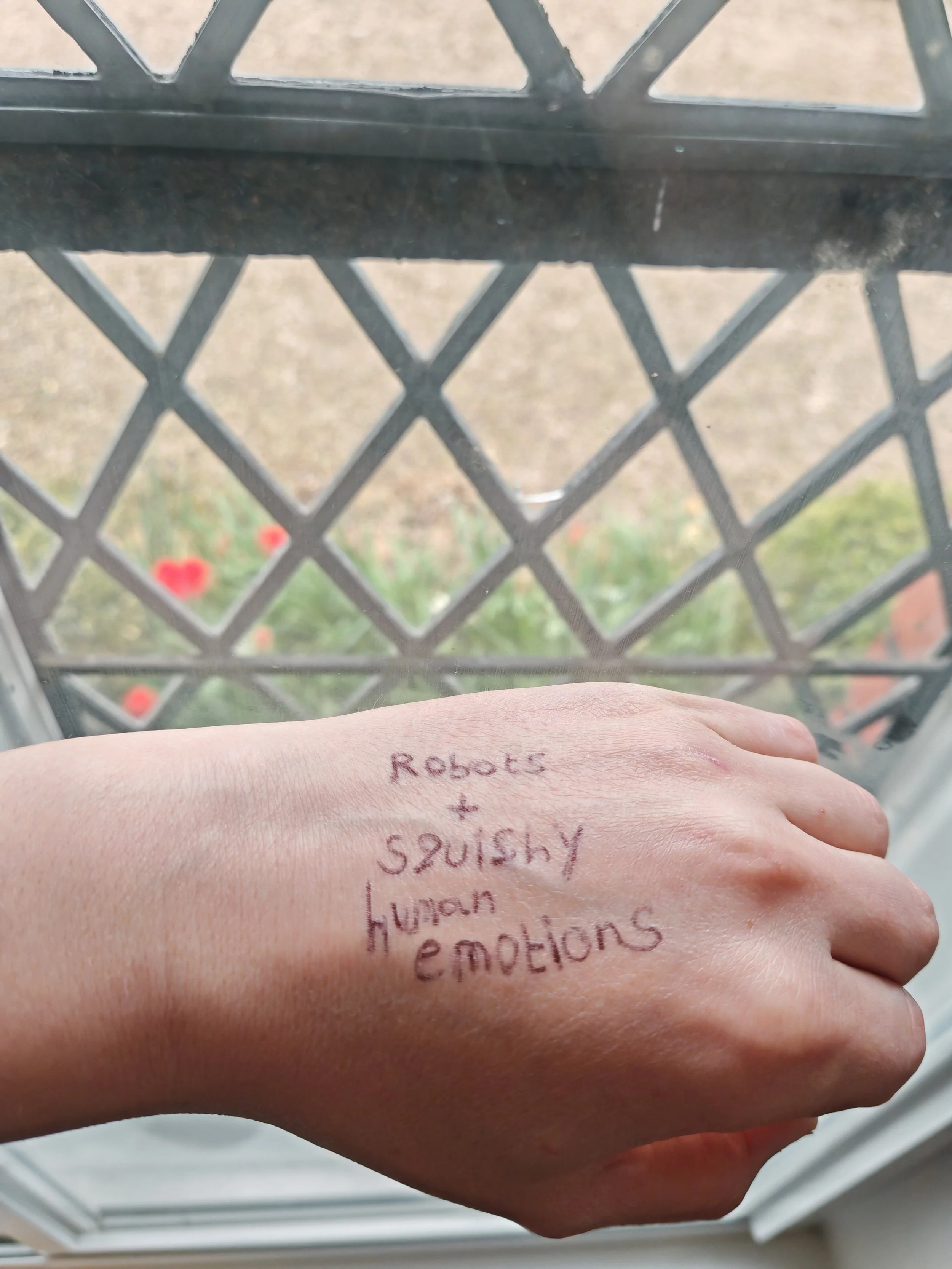

My talk is called My Most Emotional Moments in AI (And What They Taught Me). It will share some of the experiences that have most affected me while exploring AI and working with artists, cultural organisations and creative teams.

Ahead of the talk, I want to set out why emotion has become so central to how I think about AI, and why I believe the arts and culture sector has a particular role to play in claiming it.

Why emotion gets left out

When we talk about AI in professional settings, we tend to reach for a particular kind of language. We talk about efficiency, key performance indicators, productivity gains, use cases. The vocabulary is quantitative and, I generally feel, deliberately neutralised. Some of this is inherited from technology itself, where a bias towards measurement and scientific framing carries institutional weight. Some of it is inherited from business, where the language of metrics is deeply embedded as a normalised way to understand value.

What gets squeezed out of that conversation is emotion. Sometimes it is left out by accident. More often, I think, it is left out on purpose. Emotion can be treated as silly, soft, or off-topic. The pressure to sound professional often pushes people to translate a genuine response into something more measurable, even when the measurable version says less.

I have come to believe this is a real loss, particularly for people working in arts and culture.

Emotion as a signpost

Emotion is one of the most useful tools we have for understanding what matters to us. It tells us where to slow down, where to look more closely, where to ask harder questions. When something affects us, we can often trace that response back to a value we hold or an assumption we did not know we were making. When we strip emotion out of how we discuss AI, we lose access to that signposting. We end up asking what is most efficient rather than what is most important.

There is also something about reclaiming our place as humans using technology. We are individuals, and the way each of us uses (or chooses not to use) emerging AI capabilities is going to be distinct. Our emotional responses are tied to our psychology and our practice. They are part of how we work out what we want from a piece of technology, and what we want to refuse from it.

Moments that have stayed with me

In choosing the title of my talk, I was thinking about specific moments that have stayed with me.

Some of these have been difficult. Watching the work of a professional creative being affected by AI, in a way that I cannot argue with on a practical level, gives me real pause. So does seeing AI products designed for people who are particularly vulnerable, who rely on accessibility tools or on emotional support, when those products are being shaped by commercial incentives rather than care for the people using them.

Other moments have been quietly remarkable. I have seen someone use AI to work through trauma by building a computer game around it. I have seen people recreate fragments of childhood memory and have honest conversations with their families about what those memories brought up. I have used AI myself to think more deeply about my grandmother's life: what it might have been like for her growing up, and the adventures she had and the stories she gathered during the Second World War. Each of these stayed with me because the technology and the humanity were so completely wrapped up in each other.

What I see in workshops

Across more than a hundred workshops with arts and culture organisations, I have noticed a recurring pattern. People generally arrive ready to translate their reactions into the quantitative language they think is expected of them. Once a session feels safe enough, the emotional responses surface, and they are rarely uniform. I have walked into rooms expecting consensus and left understanding that a piece of work or a tool had landed completely differently for different people.

That variation is brilliant, and I think it underlines something really important. Variation in emotional response shapes how different people learn, and it shapes what they will go on to explore. Different emotional responses produce different questions, different specialisms, different lines of inquiry. This is part of why I now begin every session with emotion. I check in on how people are feeling. I try to bring in threads from other teams I have worked with so participants are learning alongside the wider sector rather than in isolation. The strongest sessions tend to begin with what people actually feel and react to, and move outward from there.

A capacity arts and culture already has

Arts and culture professionals are, in my experience, particularly well placed for this kind of work. The sector is comfortable with the language of emotion, subtlety, representation and fiction. It values these things in the work it produces and in how it operates internally. That comfort is a real strength when approaching technology that often arrives wrapped in neutralised, quantitative framing.

Sometimes I see people in the sector feel slightly embarrassed about leaning on this strength, as if it might be too soft for a conversation about AI. I would encourage the opposite. It is exactly the kind of capacity the wider conversation about this technology needs more of.

Vulnerability as the through line

Last year, I wrote for The Audience Agency about why I think AI innovation in culture is impossible without vulnerability. Vulnerability and emotion are closely tied. It takes vulnerability to admit that a tool you have been told is transformative does not actually seem to work for your context, or that you find a particular application of AI distressing for reasons you are still working out. It also takes vulnerability to share an unexpectedly positive response, or a creative experiment you are not sure other people will take seriously. Both kinds of vulnerability lead to better conversations, and better conversations are where useful innovation tends to come from.

What becomes possible

When people are allowed to be honest about fear, confusion, scepticism and excitement, the conversations open up. AI starts to feel like a real part of their practice, something to engage with in the same way they engage with the artists they work with, the audiences they serve, and the society they sit within.

We are in a period where public feeling about AI is genuinely varied and often strong. At the time of writing, over 200,000 people have signed an open letter opposing Palantir's role in the NHS. People feel deeply about how this technology is being deployed, who is deploying it, and the biases that will affect that deployment. AI is an exceptionally emotionally charged subject, and ignoring that in how we learn about it or explore it seems unnecessarily harmful.

One central message

If there is one message I want to carry into the RBO/SHIFT talk, and into the broader argument I am working through, it is that emotion is something we can claim and should claim in our work with AI. It makes the work more human-centric. It makes conversations richer. It supports different areas of specialism. And it prepares us for the kind of complicated and evolving relationship with AI that many in arts and culture will inevitably have.

For me, that complexity is the work itself, and emotion is part of how we navigate it.

Jocelyn will be speaking at the Royal Ballet and Opera's RBO/SHIFT 2026 festival on 5 June 2026 in London, as part of Friday's SHIFT EXCHANGE programme. More information is available on the festival website.